How RAG makes technical documentation actually useful

Most documentation search works like this: a user types "how do I configure webhooks," gets 15 results sorted by keyword relevance, and has to read through each one to find the answer. RAG (retrieval-augmented generation) changes this. Instead of returning a list of links, it retrieves the relevant documentation chunks and generates a direct answer.

This post explains how RAG works, why it's better than traditional search or standalone LLMs for documentation, and what to consider when implementing it.

What RAG actually does

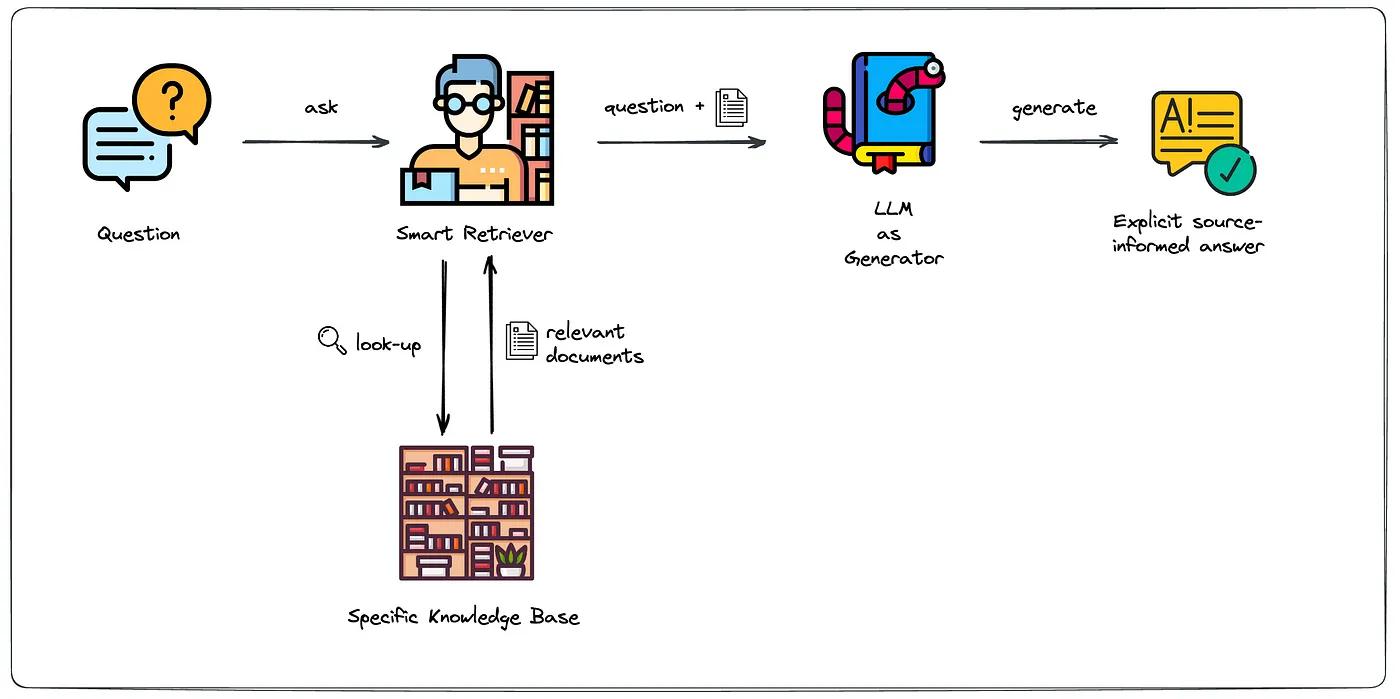

RAG combines two steps into a single pipeline:

Image from Leveraging LLMs on your domain-specific knowledge base

- Retrieval. The system takes the user's question, converts it into a vector embedding, and searches a pre-indexed database of your documentation. It returns the most relevant chunks (typically 3-10 passages).

- Generation. An LLM (GPT-4o, Claude, etc.) receives those chunks as context and generates a coherent answer. Instead of relying on what it memorized during training, the model synthesizes the answer from your actual content.

The result: users get a specific answer with citations back to your docs, not a generic response based on training data.
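To make the generation step concrete, here is a minimal sketch of how retrieved chunks might be packed into a prompt. The `Chunk` class, the `build_prompt` function, the prompt wording, and the example.com URL and sample text are all assumptions for illustration, not any particular product's implementation.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """One retrieved passage plus the metadata needed to cite it (hypothetical shape)."""
    text: str
    title: str
    url: str


def build_prompt(question: str, chunks: list[Chunk]) -> str:
    """Assemble a prompt that asks the model to answer only from numbered sources."""
    sources = "\n\n".join(
        f"[{i}] {c.title} ({c.url})\n{c.text}"
        for i, c in enumerate(chunks, start=1)
    )
    return (
        "Answer the question using only the sources below. "
        "Cite sources by number, and say so if they do not contain the answer.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}"
    )


# Made-up sample chunk; in a real system this comes from the retrieval step.
chunks = [
    Chunk(
        text="Webhooks are configured under Settings > Integrations.",
        title="Webhooks",
        url="https://docs.example.com/webhooks",
    )
]
prompt = build_prompt("How do I configure webhooks?", chunks)
print(prompt)
```

The resulting string is what would be sent to the LLM. Numbering the sources is one simple way to let the model refer back to specific passages in its answer.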

Why not just use an LLM directly?

A standalone LLM like GPT-4o can generate fluent text, but it has three problems for documentation:

- Stale knowledge. The model only knows what was in its training data. Your latest API changes aren't there.

- Hallucination. Without source material to ground it, the model can confidently state things that aren't true about your product.

- No citations. Users can't verify where the answer came from.

RAG addresses all three. The retrieval step grounds the LLM in your current documentation, which keeps answers up to date and reduces (though does not fully eliminate) hallucination. The generation step produces a readable answer. And because you know which chunks were retrieved, you can show source links alongside the response.
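Showing sources can be as simple as listing the pages the retrieved chunks came from. The sketch below assumes each retrieved chunk is a dict with `title` and `url` keys. That shape, the `source_links` name, and the sample URLs are hypothetical.

```python
def source_links(retrieved: list[dict]) -> list[str]:
    """Return one markdown link per source page, in retrieval order, without duplicates."""
    seen: set[str] = set()
    links: list[str] = []
    for chunk in retrieved:
        if chunk["url"] not in seen:
            seen.add(chunk["url"])
            links.append(f"[{chunk['title']}]({chunk['url']})")
    return links


# Made-up retrieval result: two chunks from the same page, one from another.
retrieved = [
    {"title": "Webhooks", "url": "https://docs.example.com/webhooks"},
    {"title": "Webhooks", "url": "https://docs.example.com/webhooks"},
    {"title": "Events", "url": "https://docs.example.com/events"},
]
links = source_links(retrieved)
print(links)
```

Deduplicating by URL matters because several chunks often come from the same page, and users only need the link once.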

How it works in practice

A typical RAG pipeline for documentation looks like this:

- Crawl and chunk. Your documentation pages are fetched (via sitemap, URL, or file upload) and split into chunks. A common setup is 500-1000 token chunks with some overlap between them.

- Embed. Each chunk is converted to a vector using an embedding model (e.g., OpenAI's text-embedding-3-small). These vectors capture semantic meaning, not just keywords.

- Index. The vectors are stored in a vector database (Qdrant, Pinecone, Typesense, etc.) alongside the original text and metadata (page title, URL, section heading).

- Query. When a user asks a question, the question is embedded using the same model, and the nearest vectors are retrieved.

- Generate. The retrieved chunks are passed to an LLM as context, along with the user's question. The model generates an answer grounded in those specific passages.
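The steps above can be sketched end to end in a few dozen lines. This is a toy under stated assumptions: chunks are measured in words rather than tokens, the "embedding" is a plain word-count vector rather than a real embedding model (so it matches keywords, not meaning), and the index is an in-memory list rather than a vector database. All function names and sample pages are made up for illustration.

```python
import math
import re
from collections import Counter


def chunk_words(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping word windows (words stand in for tokens here)."""
    words = text.split()
    if not words:
        return []
    step = size - overlap
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, stop, step)]


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(pages: dict[str, str]) -> list[dict]:
    """Chunk each page, embed each chunk, and keep the text and URL with the vector."""
    return [
        {"url": url, "text": chunk, "vector": embed(chunk)}
        for url, text in pages.items()
        for chunk in chunk_words(text)
    ]


def retrieve(index: list[dict], question: str, k: int = 3) -> list[dict]:
    """Embed the question the same way as the chunks and return the k nearest."""
    query = embed(question)
    return sorted(index, key=lambda entry: cosine(query, entry["vector"]), reverse=True)[:k]


# Made-up documentation pages keyed by URL.
pages = {
    "https://docs.example.com/webhooks": (
        "To configure webhooks, open the dashboard and add an endpoint URL. "
        "Webhooks send event notifications to your server."
    ),
    "https://docs.example.com/auth": (
        "API keys authenticate requests. Rotate keys regularly from the account page."
    ),
}
index = build_index(pages)
top = retrieve(index, "How do I configure webhooks?", k=1)
print(top[0]["url"])
# The generate step would pass top[...]["text"] plus the question to an LLM.
```

Swapping `embed` for a real embedding model and the list for a vector database changes the quality and scale, but the shape of the pipeline stays the same: the question and the chunks must be embedded by the same function so that their vectors are comparable.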

Self-hosted vs. hosted RAG

You can build this yourself or use a hosted service. Here's the tradeoff:

Building your own (with LangChain, LlamaIndex, etc.):

- Full control over every component

- Can customize chunking, retrieval, and prompts

- Requires engineering time to build, maintain, and scale

- You handle embedding costs, vector DB hosting, and LLM API billing

Using a hosted service (like Biel.ai):

- Point it at your docs (sitemap URL, GitHub repo, or file upload) and it handles crawling, chunking, embedding, and indexing

- Working chatbot in minutes, not weeks

- Automatic re-indexing when your docs change

- You pay a monthly fee instead of managing infrastructure

For most documentation teams, a hosted service makes sense unless you have specific requirements around data residency or custom retrieval logic.

What to monitor after launch

RAG isn't set-and-forget. Track these metrics to know if it's working:

- Answer relevance. Are the retrieved chunks actually related to the question? Check this with thumbs up/down feedback on answers.

- Unanswered questions. What percentage of queries return no relevant results? These are documentation gaps you need to fill.

- Source coverage. Are all sections of your docs being indexed? Pages behind authentication, JavaScript-rendered content, or PDFs might be missed.

- Freshness. How often is your index updated? Stale indexes mean stale answers. Automate re-crawling after every docs deployment.
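Two of these metrics are easy to compute if you log each query. The sketch below assumes a log where every entry records the best retrieval similarity score (`top_score`) and an optional user rating. The field names, the 0.3 cutoff for "no relevant results," and the sample data are all assumptions you would tune for your own setup.

```python
def summarize(log: list[dict], min_score: float = 0.3) -> dict[str, float]:
    """Compute the share of unanswered queries and the thumbs-up rate among rated answers."""
    total = len(log)
    unanswered = sum(1 for entry in log if entry["top_score"] < min_score)
    ratings = [entry["rating"] for entry in log if entry["rating"] is not None]
    return {
        "unanswered_rate": unanswered / total if total else 0.0,
        "thumbs_up_rate": ratings.count("up") / len(ratings) if ratings else 0.0,
    }


# Made-up query log; "rating" is None when the user gave no feedback.
log = [
    {"question": "How do I configure webhooks?", "top_score": 0.82, "rating": "up"},
    {"question": "How do I rotate API keys?", "top_score": 0.75, "rating": "down"},
    {"question": "Do you support SAML?", "top_score": 0.10, "rating": None},
    {"question": "What are the rate limits?", "top_score": 0.64, "rating": "up"},
]
stats = summarize(log)
print(stats)
```

Questions that fall below the score cutoff are worth reviewing by hand, since they point at topics your docs may not cover yet.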

Common pitfalls

- Too-small chunks lose context. If a chunk is just a code snippet without the surrounding explanation, the LLM can't generate a useful answer.

- Too-large chunks dilute relevance. A 5000-token chunk that covers three topics will match loosely but answer poorly.

- Ignoring metadata. Page titles, section headings, and URLs help the retrieval step rank results. Strip them out and you lose precision.

- No feedback loop. Without user ratings on answers, you have no way to measure quality or catch regressions.
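One common way to avoid the metadata pitfall is to prefix each chunk with its page title and section heading before embedding it, so a bare code snippet still carries its context. This is a sketch of that idea, not a required format. The `text_for_embedding` name and the sample values are hypothetical.

```python
def text_for_embedding(title: str, heading: str, body: str) -> str:
    """Prefix a chunk with its page title and section heading before embedding."""
    return f"{title} > {heading}\n\n{body}"


# Made-up chunk: a code snippet that would be ambiguous on its own.
enriched = text_for_embedding(
    title="Webhooks",
    heading="Verify signatures",
    body="signature = request.headers['X-Signature']",
)
print(enriched)
```

The URL is best stored next to the vector as metadata rather than inside the embedded text, since it is needed for citations but adds little to the meaning.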

Conclusion

RAG turns your documentation from a static reference into an interactive assistant. Users ask questions in plain language and get answers grounded in your actual content, with links to verify.

If you want to try this without building the pipeline yourself, Biel.ai handles the full RAG stack for documentation sites. Setup takes about 15 minutes. Try it free for 14 days.