How to tell if your documentation chatbot is actually working

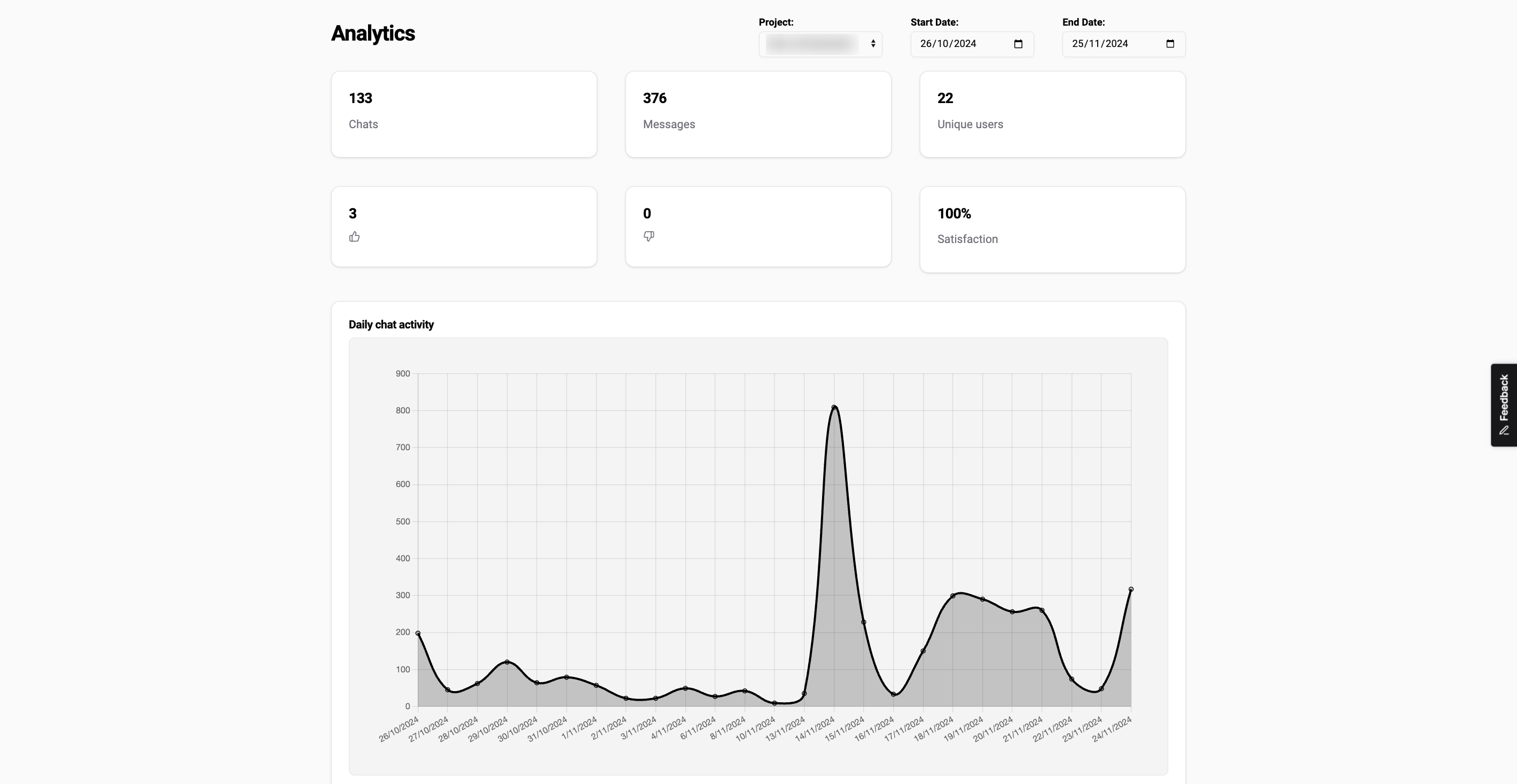

You deployed a chatbot on your documentation site. Users are clicking the widget. A few people have left thumbs up. Your support team thinks it's probably helping.

But is it actually working?

"It feels good" is not a success metric. Docs chatbots can appear useful while quietly failing users — answering confidently but inaccurately, covering only the easy questions, or missing entire sections of your content. This post covers the metrics that tell you what's really happening, how to read them, and what to do when the numbers look wrong.

Why "feels good" isn't good enough

A chatbot can generate fluent, confident-sounding answers about your product and still be wrong. Without measurement, you won't catch this until a user complains or files a support ticket that should have been resolved by the chatbot.

There's also a coverage problem. Early on, chatbots tend to handle the high-volume questions well: the ones that are answered clearly in your docs. But users don't just ask the easy questions. They ask about edge cases, version-specific behavior, and undocumented APIs. If you're only watching thumbs-up counts, you'll miss the questions the chatbot silently fails on, which can be as much as a third of them early on.

The metrics that follow give you a structured view of quality across the things that actually matter.

The four metrics that matter

1. Answer relevance

Does the chatbot return answers that are actually about what the user asked? This isn't the same as accuracy: a relevant answer could still be incomplete or wrong on the details. But relevance is the baseline.

The clearest signal here is your thumbs-up/thumbs-down ratio. In the first few weeks after launch, aim for 70% positive. After a month of iteration, 80%+ is a reasonable target. Below 60% is a sign the retrieval layer has problems: chunks are too small, pages aren't indexed, or the embedding model isn't finding the right content.
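As a rough sketch of how these thresholds could be applied, the Python below computes the positive-feedback rate from a list of ratings and compares it with the targets above. The `"up"`/`"down"` export format, the four-week cutoff for "after a month", and both function names are assumptions for illustration, not part of any particular analytics tool.

```python
from collections import Counter


def thumbs_up_rate(feedback):
    """Return the share of positive ratings, or None if nothing was rated.

    `feedback` is an iterable of "up" / "down" strings (a hypothetical
    export format). Any other value is ignored as unrated.
    """
    counts = Counter(feedback)
    rated = counts["up"] + counts["down"]
    if rated == 0:
        return None
    return counts["up"] / rated


def relevance_status(rate, weeks_since_launch):
    """Compare a thumbs-up rate with the targets described in the text."""
    if rate is None:
        return "no feedback yet"
    if rate < 0.60:
        # Below 60% points at the retrieval layer rather than the wording.
        return "investigate retrieval"
    # Assumption: "after a month" is taken to mean four weeks or more.
    target = 0.70 if weeks_since_launch < 4 else 0.80
    return "on target" if rate >= target else "below target"
```

With 36 thumbs up and 14 thumbs down the rate is 0.72, which counts as on target two weeks after launch but below target at week six.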

2. Faithfulness

Faithfulness measures whether the chatbot's answers stay grounded in your actual documentation — or whether it starts filling gaps by generating plausible-sounding text that isn't in your docs.

This one is harder to measure automatically. The most practical approach is a weekly spot check: pick 10-15 questions from your logs, run them against your chatbot, and verify each answer against the source documentation. Flag any answer where the chatbot asserts something you can't find in the docs. If you're seeing fabricated version numbers, invented API parameters, or incorrect pricing, that's a faithfulness failure.
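One way to keep the weekly spot check honest is to sample questions at random instead of picking the ones you remember. The sketch below assumes the logs are available as a plain list of question strings and that a reviewer records one true/false verdict per answer; the function names are hypothetical.

```python
import random


def pick_spot_check_sample(logged_questions, sample_size=12, seed=None):
    """Pick a random, duplicate-free sample of questions for manual review."""
    # Drop blanks and exact duplicates so one popular question cannot
    # fill the whole sample. dict.fromkeys keeps first-seen order.
    cleaned = (q.strip() for q in logged_questions)
    unique = list(dict.fromkeys(q for q in cleaned if q))
    rng = random.Random(seed)
    return rng.sample(unique, min(sample_size, len(unique)))


def faithfulness_rate(verdicts):
    """Share of reviewed answers fully supported by the docs.

    `verdicts` is a list of booleans recorded by the human reviewer:
    True when every claim in the answer was found in the source docs.
    """
    if not verdicts:
        return None
    return sum(verdicts) / len(verdicts)
```

The default of 12 sits inside the 10-15 range suggested above. Passing a fixed `seed` makes a given week's sample reproducible if someone else needs to repeat the review.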

3. Coverage rate

Coverage is the percentage of questions the chatbot can actually answer. Its complement is the unanswered rate: if a user asks something and the chatbot returns "I couldn't find relevant information in the docs," that question counts as unanswered.

Some unanswered rate is fine: users will ask things that genuinely aren't in your docs. But an unanswered rate still above 20% at the end of the first month suggests documentation gaps that need filling, or indexing problems that need investigating.
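If your analytics tool does not report this number directly, it can be approximated from exported responses. The sketch below assumes unanswered questions can be recognized by a fixed fallback phrase in the response text; the function name and the substring match are illustrative choices, and your chatbot's fallback wording may differ.

```python
def coverage_stats(responses, fallback_marker="I couldn't find relevant information"):
    """Estimate unanswered and coverage rates from raw response texts.

    A response counts as unanswered when it contains `fallback_marker`
    (case-insensitive). This is an assumption about how the chatbot
    signals that it found nothing relevant.
    """
    total = len(responses)
    if total == 0:
        return {"total": 0, "unanswered_rate": None, "coverage_rate": None}
    marker = fallback_marker.lower()
    unanswered = sum(1 for text in responses if marker in text.lower())
    unanswered_rate = unanswered / total
    return {
        "total": total,
        "unanswered_rate": unanswered_rate,
        "coverage_rate": 1 - unanswered_rate,
    }
```

For four responses where one is the fallback message, this reports an unanswered rate of 0.25 and a coverage rate of 0.75.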

4. Deflection rate

Deflection measures how often the chatbot resolves a user's question without them escalating to human support. This is the business metric your stakeholders will care about most.

Measuring it cleanly requires connecting your chatbot analytics to your support ticket volume. If you get 400 docs-related tickets per month and that drops to 270 after chatbot launch, you've deflected roughly 130 tickets. At a typical $15-25 cost per ticket, the ROI becomes easy to explain.
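The arithmetic behind that example can be written down as a small helper. This is a back-of-the-envelope sketch: the function name is hypothetical, and it assumes the whole drop in tickets is due to the chatbot, which ignores seasonality, releases, and other changes in the same period.

```python
def deflection_estimate(tickets_before, tickets_after, cost_low, cost_high):
    """Estimate deflected tickets and the monthly savings range.

    Assumes the entire before/after difference is attributable to the
    chatbot, so treat the result as an upper-bound style estimate.
    """
    if tickets_before <= 0:
        raise ValueError("tickets_before must be positive")
    deflected = tickets_before - tickets_after
    return {
        "deflected": deflected,
        "reduction": deflected / tickets_before,
        "savings_low": deflected * cost_low,
        "savings_high": deflected * cost_high,
    }
```

For the numbers above, `deflection_estimate(400, 270, 15, 25)` gives 130 deflected tickets, a 32.5% reduction, and savings of $1,950 to $3,250 per month.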

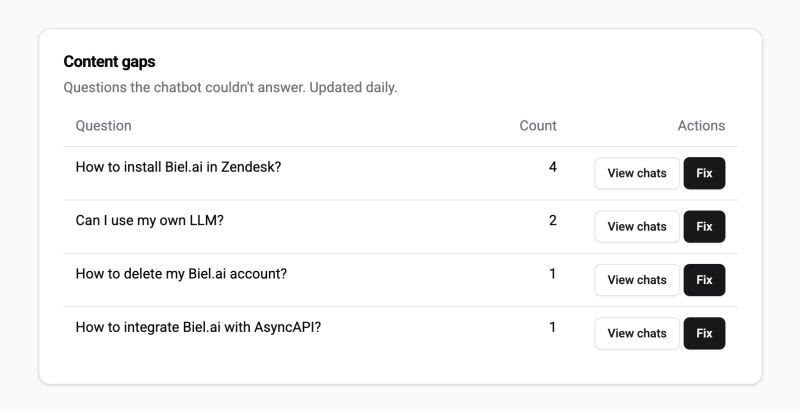

How to use Biel.ai's Content Gaps analytics

Biel.ai's analytics surfaces the unanswered and low-confidence questions in a dedicated Content Gaps view. This is the most useful part of the dashboard for documentation teams because it tells you exactly where to focus writing effort.

The view shows:

- Questions with no relevant results found

- Questions where results were found but users gave negative feedback

- Clusters of similar questions pointing to the same documentation gap

A practical workflow: check Content Gaps weekly, pull the top 5-10 recurring questions, and create or expand documentation pages to cover them. Re-index after each change. Track whether those questions move from "unanswered" to "answered" over the next two weeks.
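If you work from a raw export of unanswered questions rather than a dashboard, the "top recurring questions" step can be approximated as below. This sketch is not how Biel.ai clusters questions: it only groups questions whose wording is identical after lowercasing and stripping punctuation, and the function names are hypothetical.

```python
import re
from collections import Counter


def normalize(question):
    """Lowercase, strip punctuation, and collapse whitespace."""
    text = re.sub(r"[^\w\s]", "", question.lower())
    return " ".join(text.split())


def top_recurring(questions, limit=10):
    """Return the most frequent normalized questions with their counts."""
    normalized = (normalize(q) for q in questions)
    counts = Counter(q for q in normalized if q)
    return counts.most_common(limit)


def newly_answered(previous_gaps, current_gaps):
    """Questions that were in an earlier gap list but not in the current one."""
    current = {normalize(q) for q in current_gaps}
    return sorted({normalize(q) for q in previous_gaps} - current)
```

Running `newly_answered` on the gap list from two weeks ago and the current one shows which questions have dropped out of the gap list, which is a simple check that a docs update and re-index had the intended effect.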

This loop — measure gaps, fix docs, re-index, repeat — is what separates a chatbot that improves over time from one that plateaus.

Benchmarks: what good looks like

These are rough benchmarks based on typical documentation chatbot deployments. Your numbers will vary based on docs quality, user base, and question complexity.

First week:

- Thumbs-up rate: 65-75%

- Unanswered rate: 20-35% (expect this to be high; your docs have gaps you don't know about yet)

- Deflection rate: too early to measure reliably

First month (after iteration):

- Thumbs-up rate: 75-85%

- Unanswered rate: below 15%

- Deflection rate: 25-40% of support queries that could have been self-served

After three months:

- Thumbs-up rate: 80-90%

- Unanswered rate: below 10%

- Deflection rate: 35-50%

If you're consistently below these ranges after the expected iteration period, dig into the specific failure modes rather than assuming the chatbot isn't a good fit.
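To make the comparison repeatable, the benchmark lists above can be encoded as thresholds and checked in code. The sketch below uses the low end of each thumbs-up and deflection range and the high end of each unanswered range as the worst acceptable value; the stage keys and function name are assumptions for illustration.

```python
# Worst acceptable value per metric, taken from the benchmark lists above.
# The first week has no deflection threshold because it is too early to measure.
THRESHOLDS = {
    "first_week": {"thumbs_up_min": 0.65, "unanswered_max": 0.35},
    "first_month": {"thumbs_up_min": 0.75, "unanswered_max": 0.15, "deflection_min": 0.25},
    "three_months": {"thumbs_up_min": 0.80, "unanswered_max": 0.10, "deflection_min": 0.35},
}


def metrics_to_investigate(stage, thumbs_up, unanswered, deflection=None):
    """Return the names of metrics that fall outside the benchmark for `stage`.

    All rates are fractions between 0 and 1. A value exactly on a
    threshold is treated as acceptable.
    """
    limits = THRESHOLDS[stage]
    flagged = []
    if thumbs_up < limits["thumbs_up_min"]:
        flagged.append("thumbs_up")
    if unanswered > limits["unanswered_max"]:
        flagged.append("unanswered")
    deflection_min = limits.get("deflection_min")
    if deflection is not None and deflection_min is not None and deflection < deflection_min:
        flagged.append("deflection")
    return flagged
```

A first-month result of 78% thumbs up, 22% unanswered, and 30% deflection would flag only `unanswered`, which points at content gaps or indexing rather than answer quality.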

Red flags to watch for

High unanswered rate that isn't improving. If the unanswered rate stays above 20% after a month of adding docs, the problem might be indexing rather than content. Check whether all your pages are being crawled. Pages behind authentication, JavaScript-rendered content, and certain CMS setups can be missed.

Falling thumbs-up rate over time. If ratings were good at launch and are declining, your docs or the chatbot's index are probably going stale. The index reflects your docs as of the last crawl, so if you've updated the docs but not re-indexed, users are getting outdated answers. Automate re-indexing to run after every docs deployment.

Repeated rephrasing of the same question. This pattern, where a user asks a question, gets an unsatisfying answer, rephrases, and asks again, shows up in session logs and indicates the chatbot understood the question but couldn't retrieve good results. It often means the relevant docs section uses different terminology than your users do. Adding the terms your users actually use to those pages (or to a glossary) helps.
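Rephrasing can be spotted roughly in exported session logs by comparing each question with the one before it. The sketch below uses character-level similarity from Python's standard `difflib` module; the 0.6 threshold and the function name are assumptions that would need tuning against your own logs, and this approach will miss rewordings that share few characters.

```python
from difflib import SequenceMatcher


def count_rephrases(session_questions, threshold=0.6):
    """Count consecutive question pairs in one session that look like rewordings.

    Two questions are treated as a rephrase when their similarity ratio
    is at or above `threshold` (1.0 means identical text).
    """
    rephrases = 0
    for previous, current in zip(session_questions, session_questions[1:]):
        a = previous.lower().strip()
        b = current.lower().strip()
        if SequenceMatcher(None, a, b).ratio() >= threshold:
            rephrases += 1
    return rephrases
```

A session containing "how do i reset my password" followed by "how can i reset my password" counts as one rephrase. Sessions with two or more are good candidates for a terminology review of the pages involved.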

Support ticket volume not moving. If deflection isn't improving, check whether the chatbot is actually visible to users. An embedded widget that's hard to find, slow to load, or dismissed as "just a bot" won't be used. Session counts in your analytics will tell you whether the problem is adoption rather than answer quality.

Setting up a monthly review cadence

Alongside the weekly checks described above, a monthly 30-minute review is enough to stay on top of chatbot quality. Here's a simple structure:

- Pull last month's metrics. Thumbs-up rate, unanswered rate, total conversations.

- Check Content Gaps. What are the top 10 unanswered or low-rated questions?

- Spot-check 10 answers. Pick 10 questions from the logs and verify against your docs.

- Create a short list of docs to update. Prioritize the questions with highest frequency.

- Schedule a re-index after the updates are published.

This cadence keeps the chatbot improving without becoming a full-time job.

Where to start

If you haven't set up metrics at all yet, start with two things: enable user feedback (thumbs up/down) on every response, and check your unanswered rate in the analytics dashboard. Those two data points will tell you most of what you need to know in the first month.

If you're using Biel.ai, the analytics dashboard tracks all of this automatically. Content Gaps surfaces within a few days of launch, and the thumbs-up/thumbs-down feedback is enabled by default.

For more on building a chatbot that users actually trust, see "How to improve user experience with AI chatbots on your documentation site". And for the technical foundation under all of this, "How RAG makes technical documentation actually useful" explains why retrieval quality drives most of the metrics described here.

The goal isn't a chatbot that feels good to have. It's a chatbot that you can prove is working — and that gets measurably better over time.